We are Egeniq. Nice to meet you!

The days that the Internet was all about websites are long gone. Smart apps that you can talk to. That is what you want to offer. On every mobile device. From yesterday’s smartphone to the newest wearables. We combine a high level of knowledge with extensive practical experience and love to share them with you. Each and every day again. On the platform of your choice.

Clients in search of innovative mobile technology, like Pathé, RTL Nederland, Arsenal FC, Schiphol, NPO, Nederlandse Loterij en BNR nieuwsradio, already knew how to find us. Will you follow in their footsteps?

Services

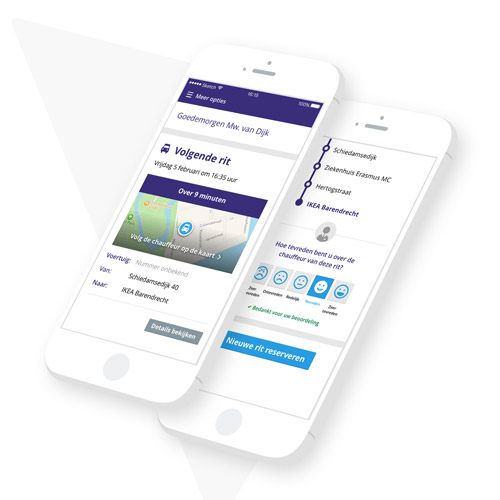

You are looking for a company that develops exciting apps, responsive websites, and backend APIs. Voice-steered of course, because typing is so 2018! Well, you have come to the right place. Egeniq can take care of the entire development trajectory, or support your own development team. Even other app developers are welcome. Together we know and can do more.

Want to share your ideas with us or have another question? Please contact us via the contact form. Request a quote for your app? Fill out the quote form and we will process your request immediately, after which we will contact you as soon as possible.

Technology

A lot of knowledge and pure craftsmanship. That is what it’s all about. Together with extensive expertise and an almost uncontrollable desire to share it. From responsive websites to voice-steered Google Assistant apps. It is our limitless ambition that makes Egeniq different. Better. It enables us to make crystal-clear analyses of what should be done for our clients. And to suggest and deliver the best solutions. Not only in terms of the right app, but also when it comes to architecture and consultancy.

Way of working

The world is changing every nano-second, bit after bit. And so are our software engineers. Their collective knowledge and practical experience are constantly growing, day after day. In designing the perfect app for you, no challenge is too big for them.

Some Brands We Work For

Cases

From the work for our clients in recent years, we have selected some cool cases.

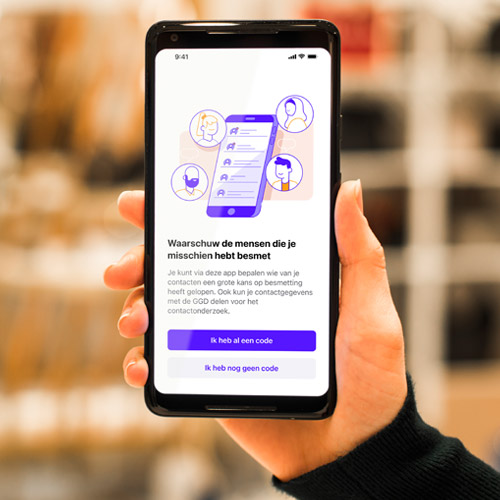

Digital support for source and contact research

Ministerie VWS

Listen to our team members

Egeniq regularly hosts events. Or presents speakers. You see, our team members not only do a great job, they can also talk about it really well and they love to share their expertise. Appdevcon is one of Egeniq’s events. A mobile technology conference for and by developers. Want to join our events? Of course, you can!